Event Loop From First Principles: How It Powers Connection Pools

1. Intro

I want to discuss one of the most elegant ideas in modern backend engineering: the event loop and how it works together with connection pools.

At first, the event loop can sound like one of those abstract runtime concepts that only framework authors need to care about. But once you understand it from first principles, it becomes surprisingly practical. You start seeing it everywhere: web servers, database drivers, message queues, chat systems, proxies, browsers, and even operating systems. This was my experience as well.

The core idea is beautifully simple:

Do not waste a thread waiting for the outside world.

A backend server spends a huge amount of its life waiting: waiting for a database to reply, waiting for Redis, waiting for another HTTP service, waiting for a client to send the next chunk of data.

If every wait blocked a full operating-system thread, high-concurrency systems would become painfully expensive. The event loop changes the game by letting a small number of threads coordinate a large number of in-flight I/O operations. It is a bit like air traffic control at an airport, where a few controllers keep track of many planes.

In this article I want to discuss how it is used together with a connection pool:

The event loop coordinates asynchronous I/O readiness.

The connection pool controls access to a limited set of expensive resources.

Together, they allow high concurrency without letting your database catch fire.

In this article I will try to build up the idea from first principles.

2. Event Loop From First Principles

Picture running a busy little samosa shop entirely by yourself.

Someone orders a fresh batch of fried samosas. You could stand near the stove, stare at the hot oil, and wait until the samosas are ready. But if you do that, the line at the counter keeps growing. Nobody else gets served. The shop feels slow, even though you are technically “working”.

A smarter approach is:

Take the order.

Ask the cook to fry the samosas.

Tell the cook, “Call me the second they are ready.”

Walk back to the counter and serve the next person.

This right here is the philosophy of the event loop:

Never waste precious time waiting for someone else.

In software terms, the event loop asks the operating system to wake it up when a timer fires, a socket becomes readable, a socket becomes writable, or some registered I/O operation makes progress.

Then it waits efficiently.

It delegates the waiting to the operating system.

On Linux, this often involves APIs such as epoll. On macOS and BSD systems, you may hear about kqueue. On Windows, the common mechanism is I/O Completion Ports, often abbreviated as IOCP.

The names differ, but the spirit is the same:

Here are the sockets and timers I care about. Please wake me when something interesting happens.

3. The Problem: Waiting Is Expensive

Programs generally do two kinds of work.

3.1. CPU Work

CPU work means the processor is actively doing something:

parsing JSON,

compressing data,

rendering templates,

validating large payloads,

hashing passwords,

running business rules,

crunching numbers.

When CPU work is happening on a thread, that thread is occupied. It cannot execute some other task at the exact same moment.

3.2. I/O Work

I/O work means the program is talking to the outside world:

querying PostgreSQL,

calling another HTTP API,

reading from a socket,

writing to a file,

waiting for Redis,

sending data to Kafka.

For the application, most I/O time is not active computation. It is waiting.

For example, the database may be using its own CPU and disk. The network packet may be travelling. The remote service may be processing the request. But your application thread is mostly just sitting there.

If a server handles requests in a naive blocking style, one slow database query can hold one whole thread hostage. A thousand slow queries can require a thousand threads.
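To make that cost concrete, here is a minimal sketch of the thread-per-request style. It uses only the standard library, and `thread::sleep` is an assumed stand-in for a slow database query. The function name `serve_blocking` is made up for this illustration.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;
use std::thread;
use std::time::Duration;

/// Serves `requests` simulated queries, one OS thread per request.
/// Returns the highest number of threads that were blocked at the same time.
fn serve_blocking(requests: usize, query_ms: u64) -> usize {
    let waiting = Arc::new(AtomicUsize::new(0));
    let peak = Arc::new(AtomicUsize::new(0));

    let handles: Vec<_> = (0..requests)
        .map(|_| {
            let waiting = Arc::clone(&waiting);
            let peak = Arc::clone(&peak);
            thread::spawn(move || {
                let now_waiting = waiting.fetch_add(1, Ordering::SeqCst) + 1;
                peak.fetch_max(now_waiting, Ordering::SeqCst);
                // Stand-in for a slow query: the thread does nothing useful here.
                thread::sleep(Duration::from_millis(query_ms));
                waiting.fetch_sub(1, Ordering::SeqCst);
            })
        })
        .collect();

    for handle in handles {
        handle.join().expect("request thread panicked");
    }
    peak.load(Ordering::SeqCst)
}

fn main() {
    let peak = serve_blocking(8, 100);
    println!("8 slow queries kept up to {peak} threads blocked at once");
}
```

Every one of those threads owns a stack and a scheduler slot while it does nothing but wait, which is exactly the waste the event loop is meant to remove.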

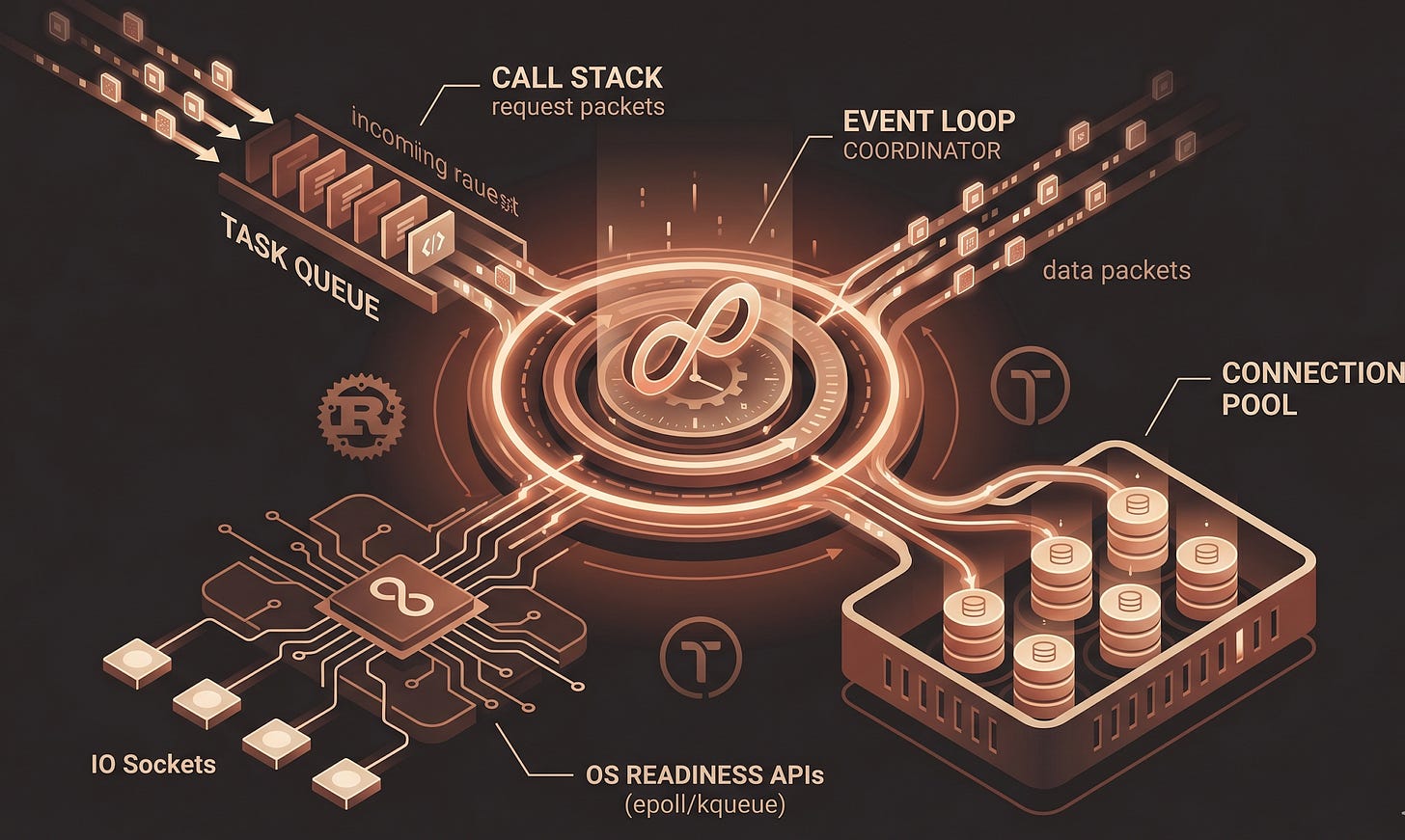

4. The Main Components

If we have to name the moving parts, they are:

4.1. Call Stack

The call stack is the “right now” of the program.

It contains the function calls currently executing. Code on the call stack is synchronous. If you run a CPU-heavy loop here, everything else can get blocked.

4.2. Operating-System Readiness APIs

These are the low-level mechanisms that let a runtime efficiently wait on many I/O resources.

Instead of checking every socket in turn, the runtime relies on events reported by the OS.

This is what makes it possible to coordinate thousands of sockets without thousands of threads.

4.3. Task Queue

When an operation becomes ready, the runtime needs to resume the work that was waiting for it.

In JavaScript, people often talk about callbacks and task queues.

In Rust async, the analogous terms are tasks, futures, and wakers.

The idea is still similar: some suspended unit of work becomes ready to make progress, so the runtime puts it back on the run queue and the event loop polls it again.

4.4. Event Loop

The event loop is a coordinator.

It repeatedly:

Runs ready tasks.

Suspends tasks waiting for I/O, timers, or resources.

Registers what each task is waiting for.

Parks the thread if no task is ready.

Wakes up when the OS/runtime signals an event.

Resumes ready tasks.

One important point, which I understood very late, is that it does not spin endlessly.

When the queue is empty, the event loop thread sleeps/parks, saving CPU cycles.

It simply runs ready tasks and waits efficiently when nothing is ready.
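The loop described above can be sketched with nothing but the standard library. This is a toy under stated assumptions, not how Tokio is implemented: tasks only ever wait on timers, a task is just a numeric id, and `thread::sleep` stands in for the blocking call into epoll or kqueue. The name `run_event_loop` is made up for this example.

```rust
use std::cmp::Reverse;
use std::collections::{BinaryHeap, VecDeque};
use std::thread;
use std::time::{Duration, Instant};

/// Runs one task per entry in `delays_ms` and returns the task ids
/// in the order in which the loop resumed them.
fn run_event_loop(delays_ms: &[u64]) -> Vec<usize> {
    let start = Instant::now();
    // Register what each task is waiting for: (deadline, task id), earliest first.
    let mut timers: BinaryHeap<Reverse<(Instant, usize)>> = delays_ms
        .iter()
        .enumerate()
        .map(|(id, &ms)| Reverse((start + Duration::from_millis(ms), id)))
        .collect();
    let mut run_queue: VecDeque<usize> = VecDeque::new();
    let mut resumed = Vec::new();

    while !timers.is_empty() || !run_queue.is_empty() {
        // 1. Run every task that is ready.
        while let Some(id) = run_queue.pop_front() {
            resumed.push(id);
        }
        // 2. Nothing is ready: park the thread until the earliest deadline.
        //    No busy loop, the thread really sleeps here.
        if let Some(Reverse((deadline, _))) = timers.peek() {
            thread::sleep(deadline.saturating_duration_since(Instant::now()));
        }
        // 3. Woken up: move every task whose timer has fired to the run queue.
        let now = Instant::now();
        while let Some(Reverse((deadline, id))) = timers.peek().copied() {
            if deadline > now {
                break;
            }
            timers.pop();
            run_queue.push_back(id);
        }
    }
    resumed
}

fn main() {
    let order = run_event_loop(&[80, 10, 40]);
    println!("tasks resumed in order {order:?}");
}
```

A real runtime waits on sockets and timers at once and wakes on whichever fires first, but the shape of the loop is the same: run what is ready, sleep when nothing is, react when woken.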

5. Futures and Async Promises

Rust’s async model is a little different from JavaScript’s mental model, but it maps beautifully to event-loop thinking.

In Rust, when you write an async fn, the compiler transforms it into a Future. A future is a value representing work that may not be complete yet.

A simplified view looks like this:

```rust
use std::future::Future;
use std::pin::Pin;
use std::task::{Context, Poll};

trait SimpleFuture {
    type Output;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output>;
}
```

A future does not necessarily run immediately when it is created.

When it cannot complete yet, maybe because it is waiting for a socket, a timer, or a separate thread, it returns:

Poll::Pending

But before returning Pending, the future takes the waker from the Context (cx) and hands a clone of it to whatever it is waiting on, such as the runtime's I/O driver or a timer. The task is now parked.

This frees the thread to poll other tasks.

When the awaited event happens, whoever holds the waker calls waker.wake(), which moves the task from the parked set back onto the run queue that the event loop drains. The task is then polled again.

When the future can complete, it returns:

Poll::Ready(value)
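Here is a small hand-written future that follows this protocol, together with a one-task executor, using only the standard library. It is a sketch for illustration: the names `Reply`, `Shared`, `ThreadWaker`, `block_on` and `fetch_reply` are made up here, and a background thread plays the role of the remote service.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};
use std::thread;
use std::time::Duration;

/// State shared between the future and the "outside world" that completes it.
#[derive(Default)]
struct Shared {
    value: Option<u32>,
    waker: Option<Waker>,
}

/// A future that resolves once another thread supplies a value.
struct Reply(Arc<Mutex<Shared>>);

impl Future for Reply {
    type Output = u32;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        let mut shared = self.0.lock().unwrap();
        match shared.value.take() {
            Some(value) => Poll::Ready(value),
            None => {
                // Not ready: leave a waker behind so the completer can reschedule us.
                shared.waker = Some(cx.waker().clone());
                Poll::Pending
            }
        }
    }
}

/// Waking means: unpark the executor thread so it polls again.
struct ThreadWaker(thread::Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// A one-task executor: poll, park while Pending, poll again after a wake.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            Poll::Pending => thread::park(), // sleeps, does not spin
        }
    }
}

fn fetch_reply() -> u32 {
    let shared = Arc::new(Mutex::new(Shared::default()));
    let remote = Arc::clone(&shared);
    thread::spawn(move || {
        thread::sleep(Duration::from_millis(50)); // pretend network latency
        let mut state = remote.lock().unwrap();
        state.value = Some(42);
        if let Some(waker) = state.waker.take() {
            waker.wake(); // the event happened: reschedule the parked task
        }
    });
    block_on(Reply(shared))
}

fn main() {
    println!("reply = {}", fetch_reply());
}
```

Note who calls `wake()`: not the future itself, but the side that makes the value available. The future only leaves the waker where that side can find it.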

6. Example: Many Tasks, Few Threads

For this example I will use Tokio, one of the most widely used asynchronous runtimes in Rust.

It provides an event loop, async task scheduling, timers, async networking, and utilities for coordination.

Here is a small example:

```rust
use tokio::time::{sleep, Duration};

#[tokio::main]
async fn main() {
    let a = tokio::spawn(handle_request("A", 800));
    let b = tokio::spawn(handle_request("B", 200));
    let c = tokio::spawn(handle_request("C", 500));

    let _ = tokio::join!(a, b, c);
}

async fn handle_request(name: &'static str, delay_ms: u64) {
    println!("request {name}: received");

    // This does not block the OS thread.
    // The task is suspended and Tokio wakes it after the timer fires.
    sleep(Duration::from_millis(delay_ms)).await;

    println!("request {name}: finished after {delay_ms}ms");
}
```

The important line is this one:

sleep(Duration::from_millis(delay_ms)).await;

That .await does not mean “block the thread until the timer completes”. It polls the Future (produced by the async function) that we discussed in the earlier section. If that future is not ready, the task is suspended: its execution state stays inside the future itself, which for a spawned Tokio task lives on the heap, and the thread is freed to work on other tasks.

Execution state here means the local variables that are still needed after the .await, plus a marker for where to resume once the waiting ends.

So request B can finish before request A even though A was spawned first. The runtime is not married to the order in which tasks began. It reacts to whichever task becomes ready next.

7. Event Loop in Server Applications

Now imagine an HTTP server.

Three requests arrive almost together:

Request A needs a database query.

Request B needs a Redis lookup.

Request C needs to call another internal service.

A blocking server might assign a thread to each request and let each thread wait.

An async server behaves differently:

It accepts Request A.

It starts the database query and suspends A until its waker is called, which puts the task back on the queue handled by the event loop.

It accepts Request B.

It starts the Redis lookup and suspends B in the same way.

It accepts Request C.

It starts the HTTP call and suspends C in the same way.

It keeps accepting more work.

When any I/O operation becomes ready, the corresponding task resumes.

The event loop is fluid. It processes whichever operation is ready first, not necessarily whichever operation started first.

7.1. Do Not Block the Event Loop

The event loop is brilliant for I/O-heavy workloads, but it is terrible if you abuse it with CPU-heavy work.

This is bad inside an async request handler:

```rust
async fn bad_handler() {
    // Imagine this takes several seconds.
    let mut total = 0u64;

    for i in 0..5_000_000_000u64 {
        total = total.wrapping_add(i);
    }

    println!("{total}");
}
```

Why is this bad?

Because there is no .await inside the loop, the task never yields.

The thread keeps working on this heavy task until the loop finishes.

The runtime cannot use that thread to poll other tasks while this CPU loop is running.

For CPU-heavy work, move it to a blocking thread pool or a dedicated worker system:

```rust
async fn better_handler() -> Result<u64, tokio::task::JoinError> {
    let result = tokio::task::spawn_blocking(|| {
        let mut total = 0u64;

        for i in 0..5_000_000_000u64 {
            total = total.wrapping_add(i);
        }

        total
    })
    .await?;

    Ok(result)
}
```

spawn_blocking tells Tokio:

This work may block or burn CPU. Please do not run it on the core async worker thread.

8. Connection Pools

Now let us add connection pools.

Opening a fresh database connection is expensive. It may involve:

DNS resolution,

TCP handshake,

TLS negotiation,

authentication,

database session setup,

server-side memory allocation.

Doing this for every request would be brutal for latency and database stability.

So instead, applications usually maintain a small set of warm, reusable connections.

That set is the connection pool.
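To show how a pool and a waker queue fit together, here is a minimal std-only sketch. It is not the API of any real pool crate: `Conn`, `new_pool`, `Acquire`, `Guard` and `CountingWaker` are all hypothetical names, the "connection" is an empty struct, and the sketch ignores timeouts, cancellation and health checks. When no connection is idle, the checkout future queues its waker and returns Pending instead of opening a new connection.

```rust
use std::collections::VecDeque;
use std::future::Future;
use std::pin::Pin;
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};

/// Hypothetical stand-in for a real, already-open database connection.
struct Conn;

struct State {
    idle: Vec<Conn>,
    waiters: VecDeque<Waker>, // tasks parked until a connection frees up
}

type Pool = Arc<Mutex<State>>;

fn new_pool(size: usize) -> Pool {
    let idle = (0..size).map(|_| Conn).collect();
    Arc::new(Mutex::new(State { idle, waiters: VecDeque::new() }))
}

/// A checked-out connection that goes back to the pool when dropped.
struct Guard {
    conn: Option<Conn>,
    pool: Pool,
}

impl Drop for Guard {
    fn drop(&mut self) {
        let next_waiter = {
            let mut state = self.pool.lock().unwrap();
            state.idle.extend(self.conn.take());
            state.waiters.pop_front()
        };
        // Wake outside the lock so the woken task can retry right away.
        if let Some(waker) = next_waiter {
            waker.wake();
        }
    }
}

/// Future for one checkout attempt.
struct Acquire {
    pool: Pool,
}

impl Future for Acquire {
    type Output = Guard;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Guard> {
        let mut state = self.pool.lock().unwrap();
        match state.idle.pop() {
            Some(conn) => Poll::Ready(Guard { conn: Some(conn), pool: Arc::clone(&self.pool) }),
            None => {
                // Pool exhausted: join the queue instead of opening a new connection.
                state.waiters.push_back(cx.waker().clone());
                Poll::Pending
            }
        }
    }
}

/// Demo helper: a waker that only counts how often it was woken.
struct CountingWaker(AtomicUsize);

impl Wake for CountingWaker {
    fn wake(self: Arc<Self>) {
        self.0.fetch_add(1, Ordering::SeqCst);
    }
}

/// Polls two checkouts by hand against a pool holding one connection.
/// Returns (second had to wait, wake-ups after release, second then succeeded).
fn demo() -> (bool, usize, bool) {
    let pool = new_pool(1);
    let counter = Arc::new(CountingWaker(AtomicUsize::new(0)));
    let waker = Waker::from(Arc::clone(&counter));
    let mut cx = Context::from_waker(&waker);

    let mut first = Acquire { pool: Arc::clone(&pool) };
    let guard = match Pin::new(&mut first).poll(&mut cx) {
        Poll::Ready(guard) => guard,
        Poll::Pending => unreachable!("a fresh pool has an idle connection"),
    };

    let mut second = Acquire { pool };
    let had_to_wait = Pin::new(&mut second).poll(&mut cx).is_pending();

    drop(guard); // returns the connection and wakes the queued waiter
    let wakes = counter.0.load(Ordering::SeqCst);
    let then_ready = Pin::new(&mut second).poll(&mut cx).is_ready();
    (had_to_wait, wakes, then_ready)
}

fn main() {
    println!("{:?}", demo());
}
```

The waiting request costs one queued waker, not one blocked thread, and the pool size puts a hard ceiling on how many queries can reach the database at once.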

9. How Can One Event Loop Handle Many DB Connections?

A database connection is usually a network socket.

If your pool has 50 database connections, the runtime does not need 50 threads just to watch them. It can register interest in all 50 sockets with the operating system.

Conceptually, the runtime says:

Here are 50 sockets. Wake me when any of them can be read from or written to.

When PostgreSQL sends data back on connection 17, the OS tells the runtime that socket 17 is ready. The runtime then wakes the task that was waiting for that query result, which puts it on the run queue. The event loop later picks it up from there and resumes it.

The event loop is not executing the database query. PostgreSQL is executing the query. The event loop is coordinating the client-side waiting.

That distinction matters a lot.
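The bookkeeping behind "socket 17 is ready, wake its task" can be sketched as a map from socket id to waker. This is an illustrative model using only the standard library: `Registry`, `Task` and `runnable_after_reply_on` are hypothetical names, the socket ids are plain numbers, and the list of ready sockets is passed in by hand where a real runtime would get it from epoll, kqueue or IOCP.

```rust
use std::collections::HashMap;
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::Arc;
use std::task::{Wake, Waker};

/// Hypothetical task handle: waking it just marks it as runnable.
struct Task {
    runnable: AtomicBool,
}

impl Wake for Task {
    fn wake(self: Arc<Self>) {
        self.runnable.store(true, Ordering::SeqCst);
    }
}

/// Maps a socket id to the waker of the task waiting on that socket.
#[derive(Default)]
struct Registry {
    waiting: HashMap<usize, Waker>,
}

impl Registry {
    fn register(&mut self, socket: usize, waker: Waker) {
        self.waiting.insert(socket, waker);
    }

    /// Called with the socket ids the OS reported as ready.
    /// Returns how many parked tasks were woken.
    fn dispatch(&mut self, ready_sockets: &[usize]) -> usize {
        let mut woken = 0;
        for socket in ready_sockets {
            if let Some(waker) = self.waiting.remove(socket) {
                waker.wake();
                woken += 1;
            }
        }
        woken
    }
}

/// Parks 50 tasks on 50 sockets, reports `socket` as ready,
/// and returns the ids of the tasks that became runnable.
fn runnable_after_reply_on(socket: usize) -> Vec<usize> {
    let mut registry = Registry::default();
    let tasks: Vec<Arc<Task>> = (0..50)
        .map(|_| Arc::new(Task { runnable: AtomicBool::new(false) }))
        .collect();
    for (id, task) in tasks.iter().enumerate() {
        registry.register(id, Waker::from(Arc::clone(task)));
    }

    registry.dispatch(&[socket]);

    tasks
        .iter()
        .enumerate()
        .filter(|(_, task)| task.runnable.load(Ordering::SeqCst))
        .map(|(id, _)| id)
        .collect()
}

fn main() {
    println!("runnable tasks: {:?}", runnable_after_reply_on(17));
}
```

One thread and one map are enough to watch all 50 connections; the other 49 tasks stay parked and cost nothing until their own socket has data.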

10. Concurrency vs Parallelism

10.1. Concurrency

Concurrency means handling multiple tasks over the same period of time.

One chef manages the stove, oven, prep station, and counter by switching attention intelligently.

That is the event loop.

10.2. Parallelism

Parallelism means doing multiple things at the exact same instant.

Three chefs are physically working at the same time.

That is multi-threading or multi-processing.

Async Rust can use both.

Tokio can run many concurrent tasks, and its multi-threaded runtime can execute tasks in parallel across worker threads. But the conceptual power of async I/O is that tasks do not need one dedicated thread each while they are waiting.

11. Summary

The event loop lets us write highly concurrent systems without burning threads on pointless waiting.

It works because most backend work is I/O-heavy. When a task waits for a socket, timer, database response, or remote service, the runtime suspends that task and lets the thread do other useful work.

Connection pools fit perfectly into this model.

The pool manages a limited set of expensive database connections. If a connection is available, a request uses it. If not, the request waits asynchronously in a queue. That queue is not just an implementation detail; it is backpressure. It protects the database from being overwhelmed by unlimited concurrency.

In Rust, async/await makes this style pleasant while still being explicit about where suspension can happen. Tokio provides the runtime machinery: task scheduling, timers, async sockets, and integration with OS readiness APIs.

The philosophy underneath all of this is simple and very natural:

Do not stand around staring at the stove. Start the work, leave a note, serve the next person, and come back when the samosas are ready.

That is the event loop.

Thanks for reading.